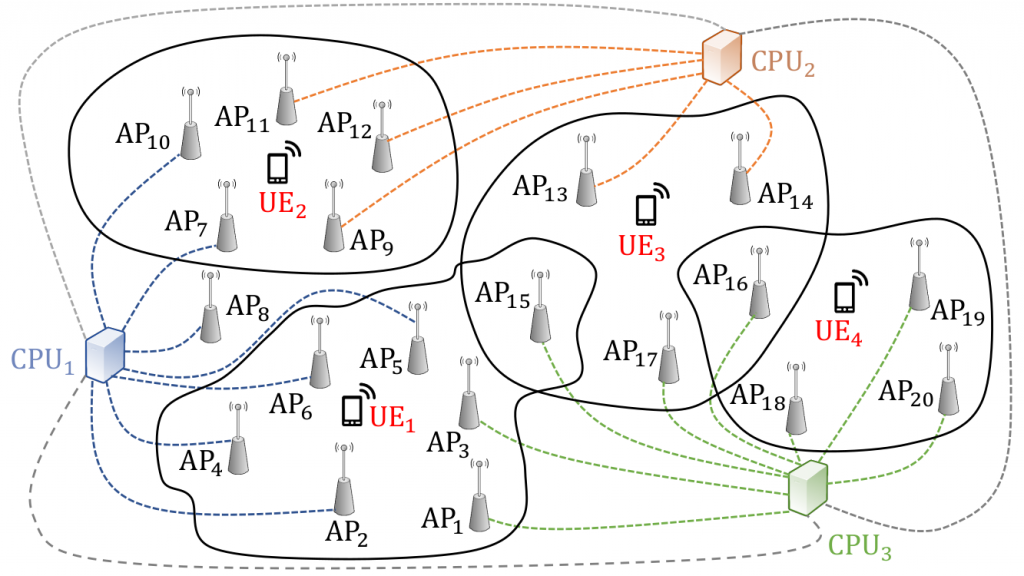

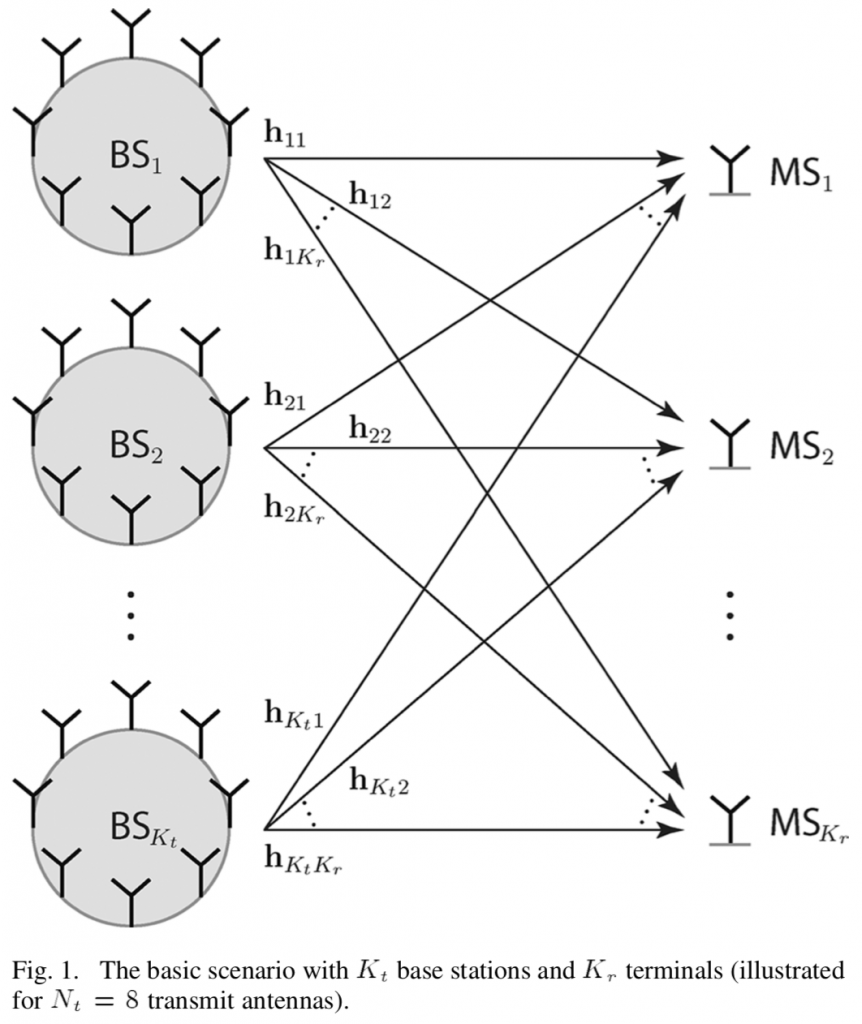

The new Cell-Free Massive MIMO concept has its roots in the classical Network MIMO concept, which has been given many other names over the years (e.g., coordinated multipoint). When I started my research on the topic in 2009, the standard assumption was that a set of base stations was jointly transmitting to a set of users by sharing both the data signals and their respective channel state information (CSI). In my first journal paper, we showed that one can get away with only sharing the data signals between the base stations, because each one only needs local CSI (between itself and the users) to beamform to the users. The price to pay is that the base stations cannot cancel each other’s interference, so each one should preferably have multiple antennas so that it can control how much interference it causes. This was my first well-cited paper but, to be honest, I am still not sure how significant the results are.

On the one hand, it is very convenient to only utilize local CSI at every base station, because it can be estimated from uplink pilots in a TDD system, which was a key motivation behind our 2010 paper. The time-critical precoding computation can then be initiated immediately after the pilots have been received, instead of waiting for the CSI to be shared between the base stations. This property was later utilized in the first Cell-Free Massive MIMO papers [Ngo, Nayebi] to alleviate the need for sharing CSI.
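To make the local-CSI idea concrete, here is a minimal sketch in plain Python. It is not the scheme from the 2010 paper or from the Cell-Free Massive MIMO papers; it assumes maximum-ratio precoding, i.i.d. complex Gaussian channels, and made-up sizes (3 base stations, 4 antennas each, 2 users). All names (`local_mr_precoder`, `effective_gain`, and so on) are hypothetical. The point it illustrates is that each base station computes its precoder from its own channel vectors only, and the signals still add up coherently at the intended user, while the interference toward the other user is left uncancelled.

```python
import random


def local_mr_precoder(h):
    """Unit-norm maximum-ratio precoder built from one base station's own channel h."""
    norm = sum(abs(x) ** 2 for x in h) ** 0.5
    return [x.conjugate() / norm for x in h]


def random_channel(num_antennas, rng):
    """Illustrative i.i.d. complex Gaussian channel vector (an assumption)."""
    return [complex(rng.gauss(0, 1), rng.gauss(0, 1)) / 2 ** 0.5
            for _ in range(num_antennas)]


rng = random.Random(0)
NUM_BS, NUM_ANT, NUM_USERS = 3, 4, 2  # hypothetical sizes

# channels[n][k]: channel vector from base station n to user k.
channels = [[random_channel(NUM_ANT, rng) for _ in range(NUM_USERS)]
            for _ in range(NUM_BS)]

# Base station n only looks at channels[n], i.e., its local CSI.
precoders = [[local_mr_precoder(channels[n][k]) for k in range(NUM_USERS)]
             for n in range(NUM_BS)]


def effective_gain(rx_user, tx_user):
    """Sum over base stations of h_{n,rx}^T w_{n,tx}."""
    return sum(
        sum(h * w for h, w in zip(channels[n][rx_user], precoders[n][tx_user]))
        for n in range(NUM_BS)
    )


desired = effective_gain(0, 0)        # signal intended for user 0, seen at user 0
interference = effective_gain(0, 1)   # signal intended for user 1, seen at user 0

print("desired gain:", desired)
print("interference gain:", interference)
```

The desired gain comes out real and positive (up to rounding), which is the coherent combining across base stations. The interference gain is a generally nonzero complex number, since no base station knows enough to cancel what the others cause.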

On the other hand, CSI is usually a small fraction of the signaling between a base station and the rest of the system in Network MIMO. The majority of the signaling consists of the data signals; for example, if a coherence block with 200 channel uses consists of 20 pilot symbols and 180 data symbols, then there is 180/20 = 9 times as much data as CSI. Interestingly, our recent paper “Making Cell-Free Massive MIMO Competitive With MMSE Processing and Centralized Implementation” shows that if Cell-Free Massive MIMO is implemented by sending all CSI to an edge-cloud processor that takes care of all the signal processing, the communication performance can be greatly improved and the signaling load reduced, as compared to the fully distributed approach (which was considered in my 2010 paper and then became the standard assumption in the Cell-Free Massive MIMO literature).
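The pilot-versus-data count in the example above can be reproduced with a couple of lines. This is only a sketch of the arithmetic; the function name `data_to_pilot_ratio` is made up for illustration, and it counts symbols per coherence block rather than actual fronthaul bits.

```python
def data_to_pilot_ratio(block_length, num_pilots):
    """Ratio of data symbols to pilot symbols in one coherence block."""
    num_data = block_length - num_pilots
    return num_data / num_pilots


# 200 channel uses, 20 of them pilots, as in the example in the text.
ratio = data_to_pilot_ratio(200, 20)
print(ratio)  # 9.0
```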

The bottom line is that it is hard to make a distributed network implementation competitive with a centralized one. Unless we can find a really clever implementation, there is a risk that we lose too much in communication performance and also raise the fronthaul capacity requirements.