The academic research into wireless communication is fast-paced, enabled by the fact that one can write a paper in just a few months if it only involves mathematical derivations and computer simulations. This feature can be a strength when it comes to identifying new concepts and developing know-how, but it also leaves a lot of research results unproven in the real world. Even if the math is correct, the underpinning models simplify the physical world. The models can have served us well in the past, but might have to be refined to keep delivering accurate insights as wireless technology becomes more advanced. This is why experimental validation is essential to build credibility behind new wireless functionalities.

Unfortunately, there are many disadvantages to being an experimental researcher in the wireless communication community. It takes longer to gather material for publications, the required hardware equipment makes the research much more expensive, and experimental results are seldom given the recognition they deserve (e.g., when awards and citations are handed out). As a result, theoretical works dominate ComSoc’s scientific journals.

The dominance of purely theoretical contributions means that we can accidentally build an entire house of cards of an emerging concept before we have validated experimentally that the foundation is solid. We can take the pilot contamination phenomenon as an example: hundreds of theoretical papers analyzed its consequences in the last decade and devised algorithms to mitigate it. However, I have not seen any experimental work validating any of it.

Experiments on Reconfigurable Intelligent Surfaces Get Recognition

In recent years, the most hyped new technology is reconfigurable intelligent surfaces (RIS). My research on this topic started five years ago when I became suspicious of the claims and modeling provided in some early works. We addressed these issues in the paper “Reconfigurable Intelligent Surfaces: Three Myths and Two Critical Questions” in 2020, but I remained skeptical of the technology’s maturity until later that year. That is when a group at the University of Surrey published a video showcasing an experimental proof-of-concept in an indoor scenario.

Further experimental results were disseminated right after that. 2024 is a special year: IEEE ComSoc decided to award two of these works with their finest awards for journal publications, thereby recognizing the importance of elevating the technology readiness level (TRL) through validation and field trials.

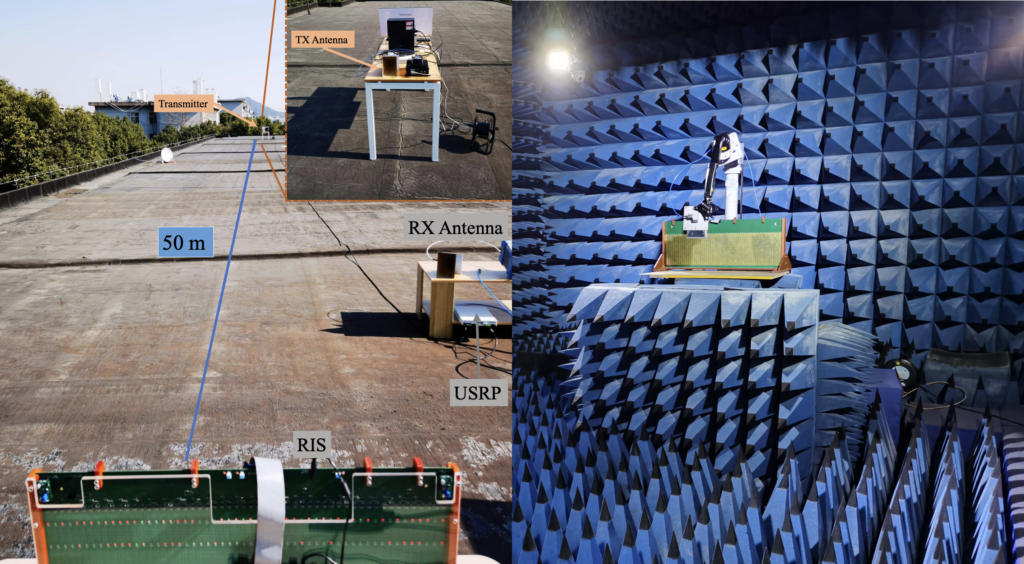

The IEEE Marconi Prize Paper Award went to the paper “Wireless Communications With Reconfigurable Intelligent Surface: Path Loss Modeling and Experimental Measurement“. This paper validated the theoretical near-field pathloss formulas for RIS-aided communications through measurements in an anechoic chamber.

The IEEE ComSoc Stephen O. Rice Prize went to the paper “RIS-Aided Wireless Communications: Prototyping, Adaptive Beamforming, and Indoor/Outdoor Field Trials“. This paper raised the TRL to 5 by demonstrating the use of the technology in a real WiFi network using existing power measurements for over-the-air RIS configuration. Experiments were made in both indoor and outdoor scenarios. I thank Prof. Haifan Yin and his team at Huazhong University of Science and Technology for involving me in this prototyping effort.

Thanks to experimental works like these, we know that the RIS technology is practically feasible to build, the basic theoretical formulas match reality, and an RIS can provide substantial gains in the intended deployment scenarios. However, if the technology should be used in 6G, we still need to find a compelling business case—this was one of the critical questions posed in my 2020 paper and it remains unanswered.