Support for mmWave spectrum is a key feature of 5G, but mmWave communication is also known to be inherently unreliable due to blockage and penetration losses, as can be demonstrated in this simple way:

This is why the sub-6 GHz bands will continue to be the backbone of the future 5G networks, just as in previous cellular generations, while mmWave bands will define the best-case performance. A clear example of this is the 5G deployment strategy of the US operator Sprint, which I heard about in a keynote by John Saw, CTO at Sprint, at the Brooklyn 5G Summit. (Here is a video of the keynote.)

Sprint will use spectrum in the 600 MHz band to achieve widespread 5G coverage. This low frequency will enable spatial multiplexing of many users if Massive MIMO is used, but the data rates per user will be rather limited since only a few tens of MHz of bandwidth are available. Nevertheless, this band will define the guaranteed service level of the 5G network.
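To get a feeling for why the bandwidth limits the per-user rate, here is a minimal sketch based on the Shannon capacity formula, rate = bandwidth × log2(1 + SNR). The 20 MHz value (standing in for "a few tens of MHz") and the 10 dB SNR are illustrative assumptions, not figures from the keynote. The 120 MHz value is the 2.5 GHz allocation discussed in this post. The function name is made up for this example.

```python
import math

def shannon_rate_mbps(bandwidth_mhz, snr_db):
    """Single-stream Shannon rate in Mbit/s (MHz times bit/s/Hz gives Mbit/s)."""
    snr_linear = 10 ** (snr_db / 10)
    return bandwidth_mhz * math.log2(1 + snr_linear)

# Assumed values: 20 MHz for the low band and 10 dB SNR in both cases.
low_band = shannon_rate_mbps(20, 10)
mid_band = shannon_rate_mbps(120, 10)

print(f"20 MHz:  {low_band:.0f} Mbit/s")
print(f"120 MHz: {mid_band:.0f} Mbit/s")
print(f"ratio:   {mid_band / low_band:.1f}x")
```

At a fixed SNR, the rate grows in proportion to the bandwidth, so the wider band gives six times the rate in this example. Real systems also differ in SNR, overhead, and the number of spatial streams, so this only isolates the bandwidth effect.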

In addition, Sprint has 120 MHz of TDD spectrum in the 2.5 GHz band and is deploying 64-antenna Massive MIMO base stations in many major cities; there will be more than 1000 sites in 2019. These can be used both to spatially multiplex many users and, at the same time, to improve the per-user data rates thanks to the beamforming gains. John Saw pointed out that the word “massive” in Massive MIMO sounds scary, but the actual arrays are neat and compact in the 2.5 GHz band. He also explained that this frequency band supports high mobility, which is very challenging at mmWave frequencies. The mobility support is demonstrated in the following video:

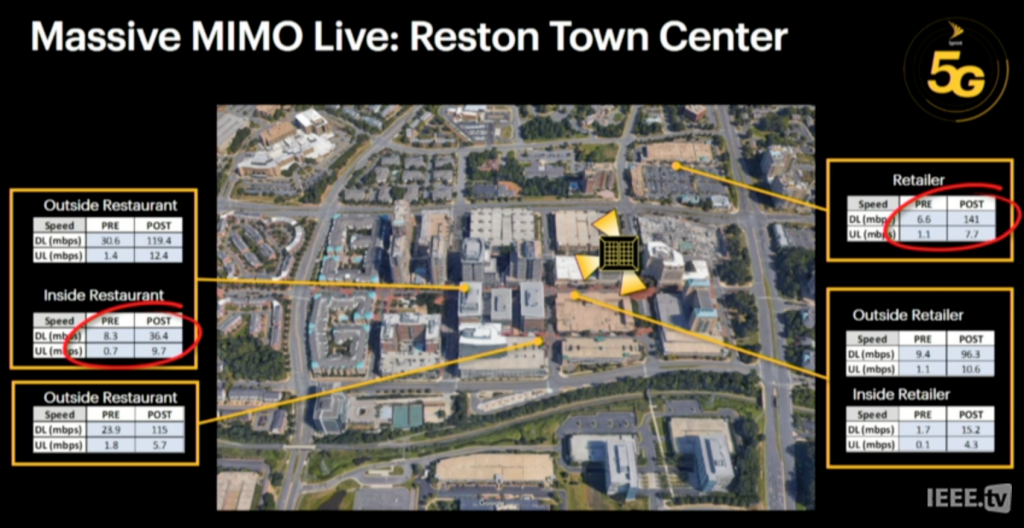

The initial tests of Sprint’s Massive MIMO systems pretty much confirm the theoretical predictions. In Plano, Texas, a 3.4x gain in downlink sum rates and 8.9x gain in uplink sum rates were observed when comparing 64-antenna and 8-antenna panels. These gains come from a combination of spatial multiplexing and beamforming; this is particularly evident in the uplink where the rates increased faster than the number of antennas. Recent measurements at the Reston Town Center, Virginia, showed similar gains: between 4x and 20x improvements at different locations (see the image below).

Tom Marzetta, the originator of Massive MIMO, attended the keynote and gave me the following comment: “It is gratifying to hear the CTO of Sprint confirm, through actual commercial deployments, what the advocates of Massive MIMO have said for so long.”

Interestingly, Sprint noticed that their customers immediately used more data when Massive MIMO was turned on, and there were more simultaneous users in the network. This demonstrates the fact that whenever you create a more capable cellular network, the users will be encouraged to use more data and new use cases will gradually appear. This is why we need to continue looking for ways to improve the spectral efficiency beyond 5G and Massive MIMO.

Emil, where can I find the most reliable reference on using TDD for 5G?

Standard documents are hard to read and fully grasp. I would recommend one of the textbooks that are written by people from the industry. For example “5G NR: The Next Generation Wireless Access Technology” by Dahlman, Parkvall and Sköld.

Thank you, Emil. Do you think there is any possibility of having Massive MIMO FDD and TDD share the same hardware? I understand that the operating frequencies will be different in the FDD uplink and downlink, but if the hardware is designed for ultra-wideband TDD, can it then be used with partial bandwidth to operate in FDD mode as well?

I don’t know the electronic design well enough to give a full answer. What I can say is that an FDD system needs to protect the receiver hardware so that the transmitted signal (which is much stronger than the received signal) does not leak into the receiver. The components used for that (e.g., analog filters) are not needed in TDD systems, which might make it hard to use TDD hardware for FDD communications.

I simulated a Massive MIMO system with 28 users and 30 BS antennas. The simulation graph shows that the sum rate first increases up to 15 users and then decreases steadily from 15 to 28 users, as the number of users approaches the number of antennas. What is the reason behind this result?

(sum-rate is on the y-axis and the number of users is on the x-axis.)

This phenomenon is explained in detail in Section 7.2 of my book Massive MIMO Networks (available for download at http://massivemimobook.com).

In short, the interference increases as we add more users into the system, so the rate per user decreases. For a while, the sum rate still increases since the rate of the new users more than compensates for the rate loss among the existing ones, but after a certain point, the rates of the existing users decrease so much that it doesn’t help that we get one more user.
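A curve of this shape can be reproduced with a few lines of code. The following is only a sketch under assumptions that are not stated in the question: uplink zero-forcing (ZF) combining, perfect channel knowledge, i.i.d. Rayleigh fading, the same SNR for every user, and no pilot overhead. Under these assumptions, a well-known lower bound on the rate per user is log2(1 + SNR·(M − K)) for M antennas and K users. With ZF, the cost of handling more users shows up as a loss in array gain, since more spatial dimensions are spent on suppressing interference. The function name and the 0 dB SNR are chosen for illustration.

```python
import math

def zf_sum_rate(num_antennas, num_users, snr_linear):
    """Lower bound on the uplink sum rate (bit/s/Hz) with zero-forcing.

    Assumes perfect CSI, i.i.d. Rayleigh fading, and equal SNR for all users.
    """
    if num_users >= num_antennas:
        raise ValueError("ZF requires fewer users than antennas")
    # Each user keeps an effective array gain of (M - K) after interference suppression.
    sinr = snr_linear * (num_antennas - num_users)
    return num_users * math.log2(1 + sinr)

M = 30       # number of BS antennas, as in the question
snr = 1.0    # assumed SNR of 0 dB (linear scale)

# Sum rate for K = 1, ..., 28 users
rates = {k: zf_sum_rate(M, k, snr) for k in range(1, 29)}
best_k = max(rates, key=rates.get)

for k in sorted({1, 10, best_k, 28}):
    print(f"K = {k:2d}: sum rate = {rates[k]:5.1f} bit/s/Hz")
print("Sum rate is maximized at K =", best_k)
```

The sum rate first grows with K and then falls as K approaches M. The exact location of the peak depends on the SNR and the processing scheme, so it will not necessarily coincide with the 15 users observed in the question.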