The bit rate (bit/s) is so tightly connected with the bandwidth (Hz) that the computer science community uses these words interchangeably. This makes good sense when considering fixed communication channels (e.g., cables), for which these quantities are proportional, with the bandwidth efficiency (bit/s/Hz) being the proportionality constant. However, when dealing with time-varying wireless channels, the bandwidth efficiency (also called spectral efficiency) can vary by orders of magnitude depending on the propagation conditions (e.g., between cell center and cell edge), which weakens the connection between the rate and bandwidth.
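To make the relation concrete, here is a minimal sketch of rate = bandwidth × bandwidth efficiency. It assumes the efficiency follows the Shannon formula log2(1 + SNR) for a single-antenna link with Gaussian noise, and the 20 MHz bandwidth and the two SNR values are hypothetical numbers chosen only for illustration.

```python
import math


def spectral_efficiency(snr_db: float) -> float:
    """Shannon spectral efficiency in bit/s/Hz for a given SNR in dB (assumed model)."""
    snr_linear = 10 ** (snr_db / 10)
    return math.log2(1 + snr_linear)


def bit_rate(bandwidth_hz: float, snr_db: float) -> float:
    """Bit rate in bit/s: bandwidth times spectral efficiency."""
    return bandwidth_hz * spectral_efficiency(snr_db)


BANDWIDTH_HZ = 20e6  # hypothetical channel bandwidth

# Hypothetical SNRs for a device near the base station and one at the cell edge.
for label, snr_db in [("cell center", 30.0), ("cell edge", -10.0)]:
    rate_mbit = bit_rate(BANDWIDTH_HZ, snr_db) / 1e6
    print(f"{label}: {spectral_efficiency(snr_db):.2f} bit/s/Hz, {rate_mbit:.1f} Mbit/s")
```

With the same bandwidth, the two hypothetical devices end up with rates that differ by a factor of roughly 70, which is why bandwidth alone says little about the rate a wireless user actually gets.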

The peak rate of a 4G device can reach 1 Gbit/s, and 5G devices are expected to reach 20 Gbit/s. These numbers greatly surpass the needs of both the most demanding contemporary use cases, such as the 25 Mbit/s required by 4K video streaming, and envisioned virtual reality applications that might require a few hundred Mbit/s. One can certainly imagine other futuristic applications that are more demanding, but since there is a limit to how much information the human perception system can process in real time, these are typically “data shower” situations where a huge dataset must be momentarily transferred to/from a device for later utilization or processing. I think it is fair to say that future networks cannot be built primarily for such niche applications. I therefore made the following one-minute video, claiming that wireless doesn’t need more bandwidth but higher efficiency, so that we can deliver bit rates close to the current peak rates most of the time instead of only under ideal circumstances.

Why are people talking about THz communications?

The spectral bandwidth has increased with every wireless generation, so naturally the same thing will happen in 6G. This is the kind of argument that you might hear from proponents of (sub-)THz communications, which is synonymous with operating at carrier frequencies beyond 100 GHz, where huge bandwidths are available for utilization. The main weakness of this argument is that increasing the bandwidth has never been the main goal of wireless development but only a convenient way to increase the data rate.

As the wireless data traffic continues to increase, the main contributing factor will not be that our devices require much higher instantaneous rates when they are active, but that more devices are active more often. Hence, I believe the most important performance metric is the maximum traffic capacity measured in bit/s/km², which describes the accumulated traffic that the active devices can generate in a given area.

The traffic capacity is determined by three main factors:

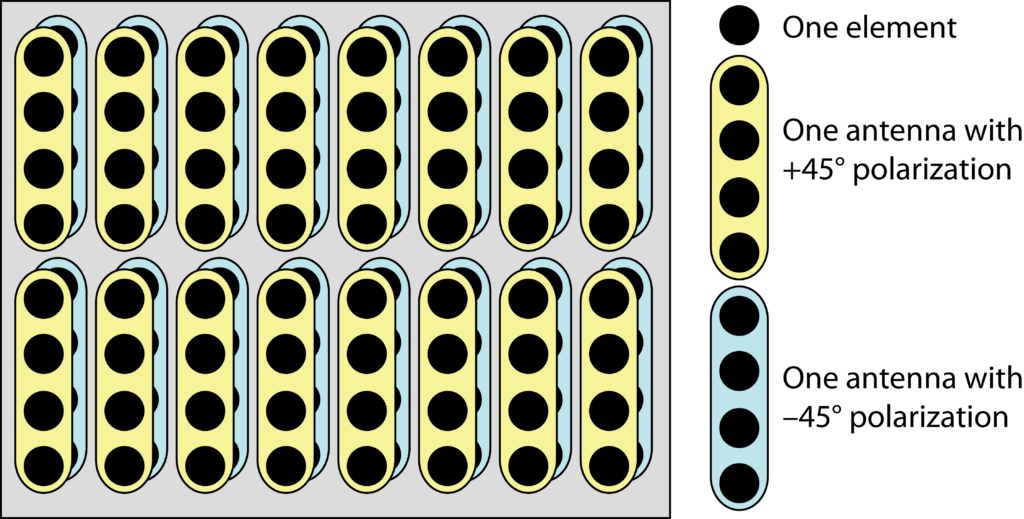

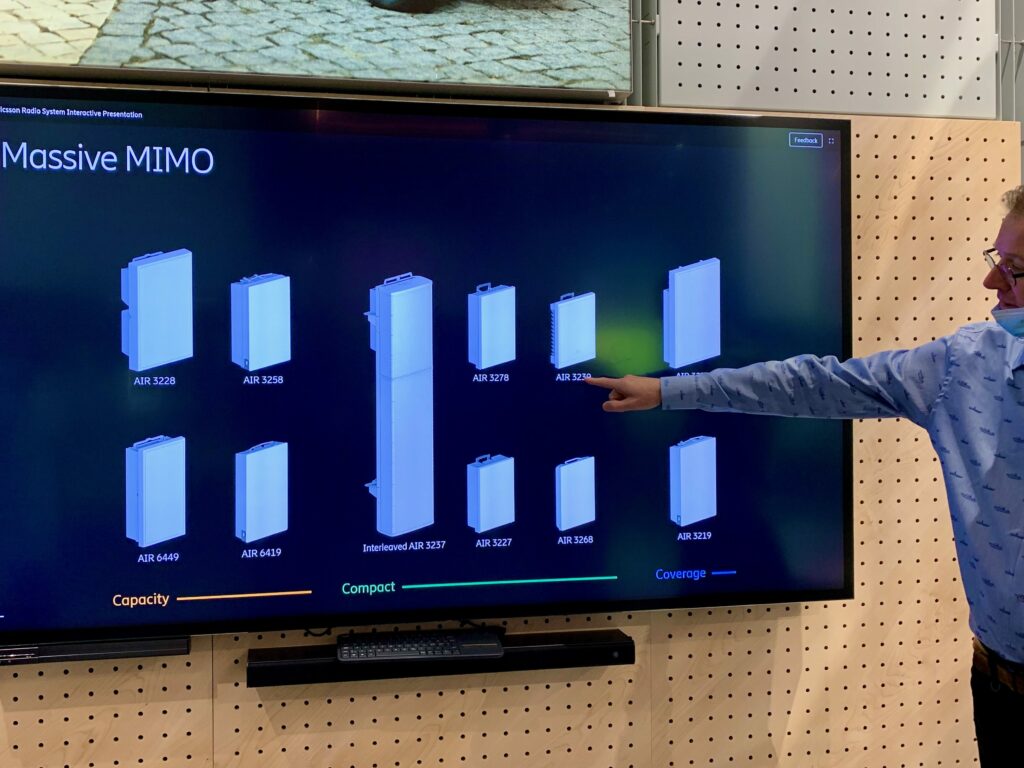

- The number of spatially multiplexed devices;
- The bandwidth efficiency per device; and
- The bandwidth.

We can certainly improve this metric by using more bandwidth, but it is not the only way and it mainly helps users that have good channel conditions. The question that researchers need to ask is: What is the preferred way to increase the traffic capacity from a technical, economic, and practical perspective?
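The sketch below illustrates both points under stated assumptions. It treats the traffic capacity as the product of the three factors (devices per km², bit/s/Hz per device, and Hz), which is the unit-consistent way to arrive at bit/s/km². It also assumes a fixed total transmit power, so that the SNR shrinks in proportion to the bandwidth, and a Shannon-type rate expression. The function names, the 100 MHz reference bandwidth, and the SNR values are hypothetical.

```python
import math


def traffic_capacity(devices_per_km2: float, efficiency_bit_s_hz: float,
                     bandwidth_hz: float) -> float:
    """Traffic capacity in bit/s/km^2, assuming the three factors multiply."""
    return devices_per_km2 * efficiency_bit_s_hz * bandwidth_hz


def rate_fixed_power(bandwidth_hz: float, snr_db_at_ref: float,
                     ref_bandwidth_hz: float) -> float:
    """Shannon rate in bit/s when the transmit power is fixed.

    The noise power grows with the bandwidth, so the SNR measured at the
    reference bandwidth is scaled by ref_bandwidth_hz / bandwidth_hz.
    """
    snr_ref = 10 ** (snr_db_at_ref / 10)
    snr = snr_ref * ref_bandwidth_hz / bandwidth_hz
    return bandwidth_hz * math.log2(1 + snr)


def bandwidth_gain(factor: float, snr_db_at_ref: float,
                   ref_bandwidth_hz: float = 100e6) -> float:
    """Rate improvement from using `factor` times more bandwidth."""
    wide = rate_fixed_power(factor * ref_bandwidth_hz, snr_db_at_ref, ref_bandwidth_hz)
    narrow = rate_fixed_power(ref_bandwidth_hz, snr_db_at_ref, ref_bandwidth_hz)
    return wide / narrow


# Doubling any one factor doubles the traffic capacity in this model.
print(traffic_capacity(10, 2.0, 100e6))  # baseline
print(traffic_capacity(20, 2.0, 100e6))  # twice as many multiplexed devices
print(traffic_capacity(10, 2.0, 200e6))  # twice the bandwidth, same efficiency

# With fixed power, the efficiency does not stay the same when bandwidth grows.
for label, snr_db in [("good channel", 20.0), ("poor channel", -10.0)]:
    print(f"{label}: 10x bandwidth gives {bandwidth_gain(10, snr_db):.2f}x the rate")
```

In this model, ten times more bandwidth gives the hypothetical good-channel user several times the rate, while the poor-channel user gains only a few percent because it is limited by power rather than bandwidth.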

I don’t think we have a conclusive answer to this yet, but it is important to remember that even if the laws of nature stay constant, the preferred solution can change with time. A concrete example is the development of processors, for which the main computing performance metric is the floating-point operations per second (FLOPS). Improving this metric used to be synonymous with increasing the clock speed, but this trend has now been replaced with increasing the number of cores and using parallel threads because it leads to more power- and heat-efficient solutions than increasing the clock speed beyond the current range.

The corresponding development in wireless communications would be to stop increasing the bandwidth (which determines the sampling rate of the signals and the clock speed needed for processing) and instead focus on multiplexing many data streams, which take the role of the threads in this analogy, and balancing the bandwidth efficiency between the streams. The following video describes my thoughts on how to develop wireless technology in that direction:

As a final note, as the traffic capacity in wireless networks increases, there will be some point-to-point links that require huge capacity. This is particularly the case between an access point and the core network. These links will eventually require cables or wireless technologies that can handle many Tbit/s, and the wireless option will then require THz communications. The points that I make above apply to the wireless links at the edge, between devices and access points, not to the backhaul infrastructure.