One of the physical-layer technologies that have received a lot of attention from the research community in recent years is called Non-Orthogonal Multiple Access (NOMA). For instance, it has been called “A Paradigm Shift for Multiple Access for 5G and Beyond”.

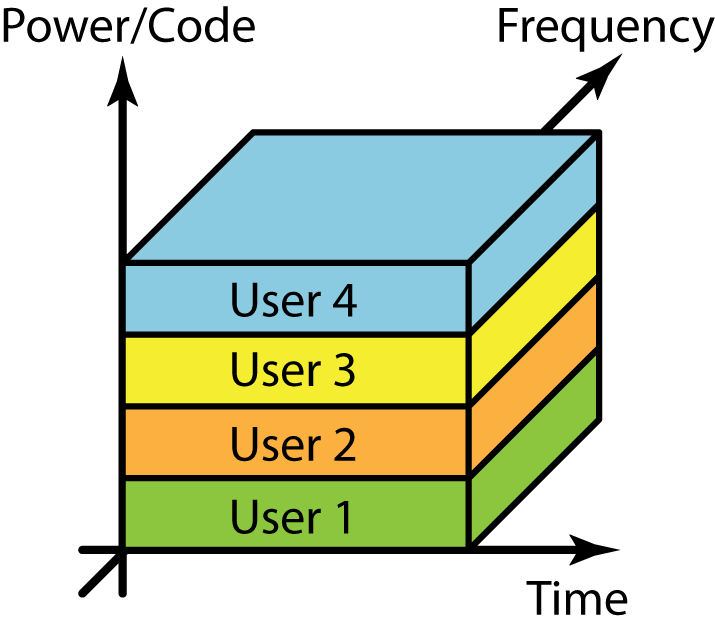

The core idea of NOMA is to assign the same time-frequency resource to multiple users, and instead (partially) separate the users in the power or code domain. This is illustrated in the figure and stands in contrast to the classic approach of assigning orthogonal resources to the users, which was done in 4G using Orthogonal Frequency-Division Multiple Access (OFDMA) and in 3G using orthogonal spreading codes. The benefit of the non-orthogonality is that the sum spectral efficiency (bit/s/Hz/cell) can be increased, if the increased interference can be dealt with using clever signal processing, such as successive interference cancelation. But from a practical standpoint, it matters a lot whether the performance gain is 1% or 1000% (~10x). The former is negligible, while the latter would constitute a paradigm shift.

Massive MIMO is also based on non-orthogonal access

The use of many antennas has become a natural part of 5G. When having an antenna array, the users can be spatially multiplexed instead of being assigned to orthogonal time-frequency resources. This is what we call Massive MIMO, and it is a non-orthogonal multiple access scheme; if you direct a spatial beam towards each user, there will be interference leakage between the beams. MIMO schemes have been around for decades, for example, under the name spatial division multiple access (SDMA). There is both experimental and theoretical evidence that the widespread support for Massive MIMO in 5G is a paradigm shift when it comes to spectral efficiency; nevertheless, it is not what most papers refer to when using the NOMA terminology.

Instead, the NOMA literature focuses on another aspect of the non-orthogonality: joint decoding of the interfering signals. It is known in information theory that weakly interfering signals should be treated as noise, while strongly interfering signals should be decoded jointly with the desired signal (or using successive interference cancelation). Hence, the methods considered in the NOMA literature are mainly effective in systems with strongly interfering signals.
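The rule of thumb above can be illustrated with a simplified single-receiver sketch. It assumes Gaussian signals, unit noise power, and an interferer whose codeword is encoded at a fixed rate `r_int`. The receiver either treats the interference as noise or, if the interfering codeword is decodable, decodes and subtracts it first (successive interference cancelation). The function names and numbers are illustrative assumptions, not taken from the NOMA papers.

```python
import math


def rate(sinr: float) -> float:
    """Shannon rate in bit/s/Hz for a linear-scale SINR."""
    return math.log2(1 + sinr)


def desired_rate(s: float, i: float, r_int: float, n: float = 1.0) -> float:
    """Best rate for the desired signal at one receiver (simplified model).

    s, i  : received powers of the desired and interfering signals (linear)
    r_int : rate (bit/s/Hz) at which the interfering codeword was encoded
    n     : noise power
    """
    # Option 1: treat interference as noise (TIN).
    tin = rate(s / (i + n))
    # Option 2: SIC. The interferer is decoded first while the desired signal
    # acts as noise; this only works if the interferer's rate is low enough.
    if r_int <= rate(i / (s + n)):
        sic = rate(s / n)  # interference removed before decoding
    else:
        sic = 0.0  # SIC not feasible; max() below falls back to TIN
    return max(tin, sic)


# Weak interference (i << s): SIC is infeasible, so TIN is used.
print(round(desired_rate(s=100, i=1, r_int=1.0), 2))  # → 5.67
# Strong interference (i >> s): decoding and subtracting it pays off.
print(round(desired_rate(s=100, i=1000, r_int=1.0), 2))  # → 6.66
```

With strong interference, treating it as noise would give only about 0.14 bit/s/Hz, so joint (successive) decoding is what makes the strong-interference case work.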

Since Massive MIMO has been used in 5G from the beginning, while NOMA remains to be standardized, a natural question arises:

Do we need other non-orthogonal access schemes than Massive MIMO in 5G?

One of the key motivating factors for Massive MIMO is the favorable propagation, which basically means that the base station has sufficiently many antennas to beamform so that the users’ channels become nearly orthogonal. One can think of it as transmitting narrow beams that lead to low interference leakage. Under these conditions, there are no strongly interfering signals, which implies that no additional NOMA features are needed to deal with the interference. We have shown this analytically in two papers: one about power-domain NOMA and a new one about code-domain NOMA.
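Favorable propagation can be checked numerically. The sketch below assumes i.i.d. Rayleigh fading (a common textbook model, not necessarily the channel model used in the papers) and estimates the average normalized inner product |h1^H h2| / (||h1|| ||h2||) between two users' channels as the number of base station antennas M grows. Values near zero mean nearly orthogonal channels; the helper names are hypothetical.

```python
import math
import random


def rayleigh_channel(m: int, rng: random.Random) -> list[complex]:
    """Channel vector with i.i.d. CN(0, 1) entries (assumed fading model)."""
    std = 1 / math.sqrt(2)  # standard deviation of each real/imaginary part
    return [complex(rng.gauss(0, std), rng.gauss(0, std)) for _ in range(m)]


def normalized_inner_product(h1: list[complex], h2: list[complex]) -> float:
    """|h1^H h2| / (||h1|| ||h2||), between 0 (orthogonal) and 1 (parallel)."""
    inner = sum(a.conjugate() * b for a, b in zip(h1, h2))
    norm1 = math.sqrt(sum(abs(a) ** 2 for a in h1))
    norm2 = math.sqrt(sum(abs(b) ** 2 for b in h2))
    return abs(inner) / (norm1 * norm2)


def average_correlation(m: int, trials: int = 1000, seed: int = 0) -> float:
    """Monte Carlo average of the normalized inner product for m antennas."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        h1 = rayleigh_channel(m, rng)
        h2 = rayleigh_channel(m, rng)
        total += normalized_inner_product(h1, h2)
    return total / trials


for m in [1, 4, 16, 64, 256]:
    print(f"M = {m:3d}: average normalized inner product {average_correlation(m):.3f}")
```

Under this model, the average decays roughly as 1/sqrt(M), which is the sense in which the users' channels become nearly orthogonal with many antennas.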

Although these papers show that NOMA usually cannot improve the sum spectral efficiency, there are indeed some special cases when it can. In particular, this happens when the number of antennas is insufficient to achieve favorable propagation. This can, for example, happen in line-of-sight scenarios where the users are closely spaced and therefore have very similar channels. However, in my experience, the NOMA gains are marginal also in these cases. When writing the two papers mentioned above, we had to spend much time on parameter tuning to find the cases where NOMA could provide meaningful improvements. With this in mind, it is quite plausible that NOMA will never be used in 5G, at least not for increasing the spectral efficiency (it could be useful for other purposes, such as grant-free access).
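The line-of-sight case can be sketched with a uniform linear array (ULA) with half-wavelength antenna spacing, which is an assumed geometry here. In pure line-of-sight, each user's channel is the array response a(θ) with entries exp(jπk sin θ), and the normalized correlation |a(θ1)^H a(θ2)| / M measures how similar two users' channels are. The helper names and the choice of 64 antennas are illustrative.

```python
import cmath
import math


def ula_response(m: int, theta_deg: float) -> list[complex]:
    """LoS array response of an m-element ULA with half-wavelength spacing."""
    phase = math.pi * math.sin(math.radians(theta_deg))
    return [cmath.exp(1j * phase * k) for k in range(m)]


def los_correlation(m: int, theta1_deg: float, theta2_deg: float) -> float:
    """|a(theta1)^H a(theta2)| / m, from 0 (orthogonal) to 1 (identical)."""
    a1 = ula_response(m, theta1_deg)
    a2 = ula_response(m, theta2_deg)
    inner = sum(x.conjugate() * y for x, y in zip(a1, a2))
    return abs(inner) / m  # each response vector has squared norm m


# Two users seen from broadside; the second user is moved away in angle.
for delta in [0.5, 1.0, 2.0, 5.0, 10.0]:
    print(f"M = 64, separation {delta:4.1f} deg: {los_correlation(64, 0.0, delta):.3f}")
```

The correlation is not monotonic in the angular separation (there are nulls and sidelobes), but it stays close to 1 only within roughly the main-lobe width, which shrinks as the number of antennas grows. Whether NOMA actually pays off in such a case also depends on the SNR and the user rates, which this sketch does not model.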

What about beyond 5G systems?

When it became clear that NOMA wouldn’t play any big role in 5G, the research focus shifted towards beyond 5G systems. One of the prominent new advances on non-orthogonal access is called rate splitting. The recent paper “Is NOMA Efficient in Multi-Antenna Networks?” provides a pedagogical overview. The paper also argues that rate splitting combines the best aspects of conventional NOMA and Massive MIMO, in a way that guarantees a higher sum spectral efficiency. While it is true that a well-designed rate splitting system can never be worse than conventional Massive MIMO with linear processing, the key question is: how large performance gains can be achieved?

In the overview paper, the case for rate splitting is based on multiplexing gain analysis. This means that the sum spectral efficiency (bit/s/Hz) is studied when the transmit power P is asymptotically large. Different access schemes will achieve different spectral efficiencies, but they all behave as M log2(P)+C, where the factor M is the multiplexing gain and C is a constant. When P is large, the scheme that achieves the largest multiplexing gain is guaranteed to give the largest spectral efficiency, irrespective of the value of C.
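To see why the multiplexing gain dominates at high power, consider two hypothetical schemes whose sum spectral efficiencies behave as M1 log2(P) + C1 and M2 log2(P) + C2, with M2 > M1. Setting them equal gives the crossover power log2(P*) = (C1 - C2)/(M2 - M1); above P*, the scheme with the larger multiplexing gain wins regardless of the constants. The numbers below are made up for illustration.

```python
import math


def asymptotic_se(m_gain: float, c: float, p: float) -> float:
    """High-power approximation M*log2(P) + C of the sum spectral efficiency."""
    return m_gain * math.log2(p) + c


def crossover_power(m1: float, c1: float, m2: float, c2: float) -> float:
    """Power P* above which scheme 2 (larger multiplexing gain) beats scheme 1."""
    if m2 <= m1:
        raise ValueError("scheme 2 must have the larger multiplexing gain")
    return 2 ** ((c1 - c2) / (m2 - m1))


# Hypothetical: scheme 1 has M = 4 and a head start C = 10 bit/s/Hz,
# scheme 2 has M = 5 and C = 0.
p_star = crossover_power(4, 10, 5, 0)
print(p_star, 10 * math.log10(p_star))  # P* = 1024, i.e., about 30.1 dB
for p in [p_star / 10, p_star * 10]:
    print(asymptotic_se(4, 10, p), asymptotic_se(5, 0, p))
```

Below P*, the scheme with the larger constant C gives the higher spectral efficiency, so a multiplexing gain argument alone says nothing about which scheme is better at a given practical SNR.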

If the channels are known perfectly, then a single-cell Massive MIMO system achieves the maximum multiplexing gain (it is equal to the minimum of the total number of transmit antennas and the total number of receive antennas). However, if the channels are known imperfectly, then the multiplexing gain is reduced when using linear processing, and the above-mentioned paper shows that the rate splitting approach can achieve a larger multiplexing gain than conventional Massive MIMO. This is mathematically correct, but there is one catch: the power used for channel estimation is assumed to grow more slowly than the power P used for data transmission. In practice, we could use the same power for both estimation and data transmission; hence, in the large-P regime considered in the multiplexing gain analysis, we will have perfect channel knowledge. Rate splitting cannot increase the multiplexing gain in that case.
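The role of the pilot power can be illustrated with a deliberately simplified toy model, which is an assumption for illustration and not the model used in the rate splitting paper. Suppose the pilot power scales as P^α, so the channel estimation error variance is 1/(1 + P^α), and the interference that linear processing fails to suppress is proportional to P times this error variance. The per-user multiplexing gain is then the slope of the rate versus log2(P) at high power.

```python
import math


def toy_user_rate(p: float, alpha: float) -> float:
    """Per-user rate (bit/s/Hz) in a toy imperfect-CSI model, unit noise power.

    Assumptions: pilot power p**alpha gives estimation error variance
    1 / (1 + p**alpha); residual interference after linear processing is
    p times that error variance.
    """
    error_var = 1 / (1 + p ** alpha)
    sinr = p / (1 + p * error_var)
    return math.log2(1 + sinr)


def multiplexing_gain_estimate(alpha: float, p1: float = 1e8, p2: float = 1e10) -> float:
    """Numerical slope of the rate with respect to log2(P) at high power."""
    rise = toy_user_rate(p2, alpha) - toy_user_rate(p1, alpha)
    return rise / (math.log2(p2) - math.log2(p1))


# alpha < 1: pilot power grows more slowly than data power, so the gain drops.
print(round(multiplexing_gain_estimate(0.5), 3))  # ≈ 0.5
# alpha = 1: same power for pilots and data, so the full gain of 1 per user.
print(round(multiplexing_gain_estimate(1.0), 3))  # ≈ 1.0
```

In this toy model, the reduced multiplexing gain comes entirely from the assumption α < 1; with α = 1, the estimation error shrinks fast enough for linear processing to reach the full gain.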

That said, rate splitting can still improve the sum spectral efficiency compared to Massive MIMO in practical setups (at least it cannot be worse), but we should not expect any paradigm shift. Massive MIMO is already utilizing the multiplexing gain to push the spectral efficiency to new heights in 5G. Further improvements are possible by increasing the number of antennas, but not by refining the access scheme, which can only increase the constant C, not the multiplexing gain M.

If you want to learn more about NOMA and rate splitting, I recommend the following episode of our podcast:

In Fig. 1, you assume CDMA is a kind of NOMA, right? Correct me if I am wrong.

Yes, CDMA is a form of NOMA if non-orthogonal codes are used. It is also known as code-domain NOMA. 3G typically used orthogonal codes.

The idea of non-orthogonal multiple access actually comes from CDMA. Yes, CDMA is a code-domain NOMA.

Great discussion! You said that NOMA with massive MIMO does not bring practical benefits. However, is it possible to jointly use rate splitting and massive MIMO? Does it make sense?

As I try to explain in the last section of the blog post, rate splitting can increase the spectral efficiency (at least it won’t be worse, if it is optimized properly). But the gains are not in terms of achieving a larger multiplexing gain, as sometimes claimed in the rate splitting literature, but SINR gains due to removing some interference. I personally believe that the gains are not worthwhile in practical setups, since the small spectral efficiency gain comes at the price of coupling the coding of all the users’ data transmissions.

Sir, I had already listened to your podcast on this topic, but your blog post clarified a lot more about NOMA and rate splitting. Kindly always try to publish a blog post after a podcast episode on the same topic; that is my request.

NOMA can improve the sum spectral efficiency when the number of antennas is insufficient. In that case, how can we define “insufficient”? I mean, how many antennas can be considered sufficient?

It is hard to answer this precisely because it depends on the array geometry, user locations, and propagation environment.

You can check out Figure 1 in https://arxiv.org/pdf/2003.01281.pdf

With 64 antennas and line-of-sight channels, the angle difference to the users must be below 5 degrees if NOMA should be useful.

My experience is that one can purposely create examples like this where there is a performance gain, but if one places the users randomly, such cases occur very seldom, so the average gain is negligible.

Considering the random environment and the random behavior of the users, it should rarely happen that two users are within 5 degrees of each other. Therefore, it looks impractical, but I will study that paper to dig into it and understand it better.

Thanks for your great discussion!

Thank you so much, you are the best when you give info.

Is sum data rate more important than the individual data rate?

The NOMA sum rate is better than with OMA. However, the individual data rate of the far user is usually smaller than the far user’s data rate in OMA.

Does sum rate ensure higher capacity for each user?

Whether to consider the sum rate or the individual rates depends on what you, as the network designer, think is the most important metric.

NOMA doesn’t achieve a larger sum rate than OMA in basic scenarios with single-antenna equipment and perfect CSI. However, the rate region is larger with NOMA, which means that one can choose how to distribute the sum rate between the users. I recommend reading Section 6.1 in Fundamentals of Wireless Communications, which shows these things (but using older terminology, since NOMA isn’t new).
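The difference between the sum rate and the rate region can be sketched for a two-user single-antenna downlink with perfect CSI and unit noise power. The assumptions are: NOMA uses superposition coding with a power fraction beta for the strong (near) user and SIC at that user, while the OMA baseline is simple time division where each user gets the full power P in its own slot. For a given target rate of the weak (far) user, each hypothetical function returns the best rate left for the strong user; the SNR and channel gains are made up.

```python
import math


def noma_strong_rate(p: float, g_strong: float, g_weak: float, target_weak: float) -> float:
    """Strong-user rate with superposition coding + SIC, given the weak user's rate.

    The weak user treats the strong user's signal as noise:
        target_weak = log2(1 + (1 - beta) p g_weak / (beta p g_weak + 1)).
    Solving for beta gives the power fraction left for the strong user, who
    cancels the weak user's signal first (feasible since g_strong >= g_weak).
    """
    t = 2 ** target_weak - 1  # SINR the weak user needs
    beta = (p * g_weak - t) / (p * g_weak * (1 + t))
    if beta < 0:
        raise ValueError("target rate of the weak user is not achievable")
    return math.log2(1 + beta * p * g_strong)


def oma_strong_rate(p: float, g_strong: float, g_weak: float, target_weak: float) -> float:
    """Strong-user rate with time division (full power P in each user's slot)."""
    tau_weak = target_weak / math.log2(1 + p * g_weak)  # time share of the weak user
    if tau_weak > 1:
        raise ValueError("target rate of the weak user is not achievable")
    return (1 - tau_weak) * math.log2(1 + p * g_strong)


p, g_strong, g_weak = 100.0, 1.0, 0.1  # hypothetical SNR and channel gains
for target in [0.0, 1.0, 2.0, 3.0]:
    noma = noma_strong_rate(p, g_strong, g_weak, target)
    oma = oma_strong_rate(p, g_strong, g_weak, target)
    print(f"weak user {target:.1f}: strong user NOMA {noma:.2f}, OMA {oma:.2f}")
```

At a weak-user target of 0, both schemes give log2(1 + P g_strong), which is the maximum sum rate in this setup, so the sum rates coincide. For positive targets, NOMA leaves more rate for the strong user in this example, which illustrates the larger rate region. Note that the comparison uses the simplest time-division baseline.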