One question that I often receive from reviewers is: What is the difference between Cell-free Massive MIMO and the C-RAN technology? In this blog post, I will provide my usual answer to this question and then elaborate on why reviewers are not always satisfied with it.

In a nutshell, a base station consists of an antenna, a radio unit, and a baseband unit (or multiple antennas and radios, in the case of MIMO). Traditionally, these components are deployed at the same site: the antenna is mounted on a tower, the baseband unit is placed at its base, and the radio is located at one of these two places.

Cloud Radio Access Network (C-RAN)

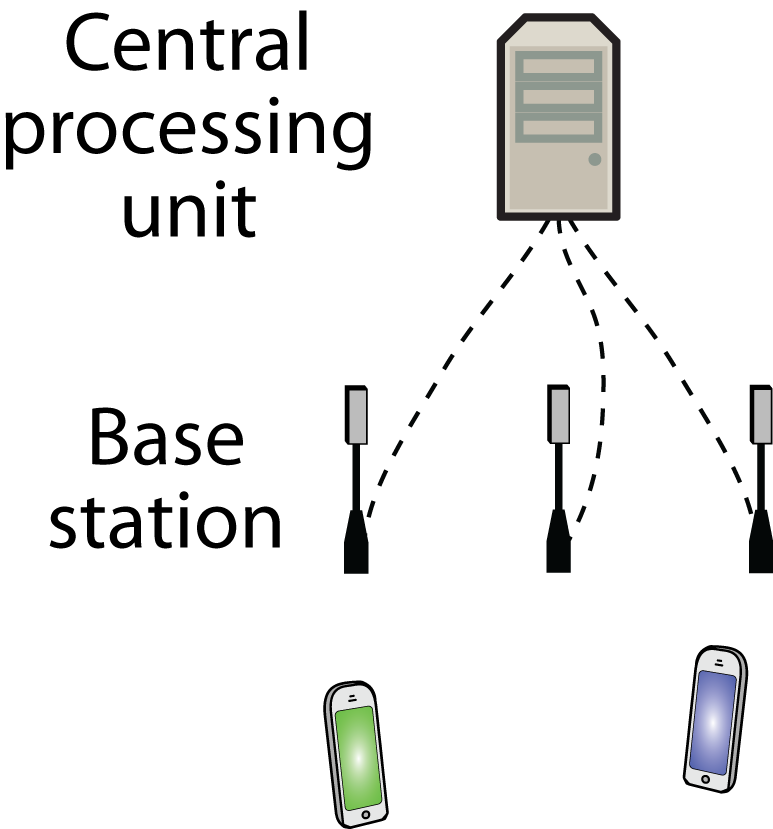

C-RAN is an alternative deployment architecture where the baseband units are moved to other locations, “in the cloud” so to say. The C in C-RAN can also stand for “centralized”, which might be the preferable term since the word “cloud” is often associated with general-purpose computing hardware. In C-RAN, the baseband processing of many neighboring base stations is carried out at a single central processing unit (CPU), which might use either specialized or general-purpose hardware. For latency reasons, the physical distance between a base station site and its CPU shouldn’t be more than a few kilometers. Pooling the processing resources in this way can reduce the total hardware expenditure, particularly when some base stations often have low traffic load: since the sites rarely need their peak capacity at the same time, the shared hardware can be dimensioned for less than the sum of the individual peaks. In other words, one can build a network that can handle high traffic anywhere, as long as it doesn’t happen everywhere at the same time. Another important benefit is that neighboring base stations can cooperate more easily when their processing is carried out at the same CPU anyway.
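The pooling argument can be illustrated with a small sketch. It assumes, hypothetically, that a dedicated baseband unit must be dimensioned for its own site's peak load, whereas a shared CPU only needs to handle the peak of the aggregate load. The load profiles below are synthetic (each site gets one busy hour and light traffic otherwise) and are not measurements; the helper names are made up for this illustration.

```python
import random

def dedicated_capacity(loads):
    """Capacity needed when every site has its own baseband unit sized for its own peak."""
    return sum(max(site) for site in loads)

def pooled_capacity(loads):
    """Capacity needed when one CPU serves all sites: size it for the peak of the total load."""
    num_slots = len(loads[0])
    return max(sum(site[t] for site in loads) for t in range(num_slots))

def synthetic_loads(num_sites, num_slots, seed=1):
    """Hypothetical load profiles: one busy slot (load 1.0) per site, light load elsewhere."""
    rng = random.Random(seed)
    loads = []
    for _ in range(num_sites):
        site = [rng.uniform(0.05, 0.3) for _ in range(num_slots)]
        site[rng.randrange(num_slots)] = 1.0  # the busy hour lands at a random time
        loads.append(site)
    return loads

loads = synthetic_loads(num_sites=10, num_slots=24)
d = dedicated_capacity(loads)
p = pooled_capacity(loads)
print(f"dedicated: {d:.2f}, pooled: {p:.2f}, saving: {1 - p / d:.0%}")
```

Since the peak of a sum can never exceed the sum of the peaks, pooling never needs more hardware than dedicated units; the saving is largest when the busy hours of different sites rarely coincide, which is exactly the "anywhere, but not everywhere" condition.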

In summary, C-RAN is a deployment architecture. Different air interfaces (e.g., 4G, 5G) can be implemented on top of the C-RAN architecture, making use of different physical layer techniques (e.g., Massive MIMO, coordinated multipoint).

Cell-free Massive MIMO

This is a new physical-layer technology in which neighboring base stations (called access points in the related literature) jointly serve the users in their vicinity. By carrying out coherent signal processing, the signal power can be boosted, the interference can be mitigated, and the impact of cell boundaries is alleviated. We are essentially creating a wide-area network that is free from cells. There are different forms of Cell-free Massive MIMO, characterized by where the baseband processing is carried out. It can either be fully centralized at the CPU or distributed as far as possible to pre-processing units located at each access point. The following video elaborates on these different implementation levels:
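As a rough sketch of the coherent gain and of what separates the implementation levels, consider a single user in the uplink served by several multi-antenna access points. The sketch assumes perfect channel knowledge, ignores pilots and channel estimation, uses maximum-ratio (MR) combining, and draws hypothetical channel gains; the function name `uplink_mr` is made up for this example. For MR, forming local estimates at each access point and summing them at the CPU yields the same result as centralized processing; only the signals sent over the fronthaul differ. (For more advanced combining schemes, such as MMSE-type processing, centralized and distributed implementations generally perform differently.)

```python
import math
import random

def complex_gaussian(rng, variance):
    """Draw one sample from the circularly symmetric complex Gaussian CN(0, variance)."""
    std = math.sqrt(variance / 2)
    return complex(rng.gauss(0.0, std), rng.gauss(0.0, std))

def uplink_mr(num_aps=8, antennas_per_ap=4, tx_power=1.0, noise_var=1.0, seed=0):
    rng = random.Random(seed)
    # Hypothetical large-scale fading: gains spread over 30 dB, so a few APs dominate.
    gains = [10 ** rng.uniform(-2.0, 1.0) for _ in range(num_aps)]
    # h[m][n]: channel from the user to antenna n of AP m (assumed perfectly known).
    h = [[complex_gaussian(rng, g) for _ in range(antennas_per_ap)] for g in gains]
    symbol = 1 + 0j
    # y[m][n]: received signal = sqrt(p) * h * symbol + noise
    y = [[math.sqrt(tx_power) * hmn * symbol + complex_gaussian(rng, noise_var) for hmn in hm]
         for hm in h]

    # Centralized: every AP forwards all antenna samples; the CPU applies MR combining.
    forwarded_centralized = [ymn for ym in y for ymn in ym]
    channels_at_cpu = [hmn for hm in h for hmn in hm]
    centralized = sum(c.conjugate() * s for c, s in zip(channels_at_cpu, forwarded_centralized))

    # Distributed: each AP combines locally and forwards one scalar per user to the CPU.
    local = [sum(hmn.conjugate() * ymn for hmn, ymn in zip(hm, ym)) for hm, ym in zip(h, y)]
    distributed = sum(local)

    # Post-combining SNR of MR with perfect CSI, versus the best AP acting alone.
    power = [sum(abs(hmn) ** 2 for hmn in hm) for hm in h]
    snr_joint = tx_power * sum(power) / noise_var
    snr_best_ap = tx_power * max(power) / noise_var
    return {
        "centralized": centralized,
        "distributed": distributed,
        "fronthaul_centralized": len(forwarded_centralized),  # scalars per symbol
        "fronthaul_distributed": len(local),                  # scalars per symbol
        "snr_joint_db": 10 * math.log10(snr_joint),
        "snr_best_ap_db": 10 * math.log10(snr_best_ap),
    }

result = uplink_mr()
print(f"joint SNR: {result['snr_joint_db']:.1f} dB, "
      f"best single AP: {result['snr_best_ap_db']:.1f} dB")
```

The joint SNR is the sum of the per-AP contributions, so it is always at least as high as that of the strongest access point alone, which is the signal power boost from coherent processing.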

My answer

The simple answer to the question posed in the first paragraph is that C-RAN is a network architecture and Cell-free Massive MIMO is a physical-layer technology that can be deployed using either C-RAN or some other architecture. It is not a matter of selecting one or the other; both can coexist and benefit from each other. My group is presenting a paper at ICC 2022 that exemplifies how to optimize the operation of Cell-free Massive MIMO when it is implemented in C-RAN.

The weak spot of my answer is that C-RAN was already proposed in 2011, motivated by the industry’s need to consolidate its network assets, and a large amount of academic research has been carried out since then. Some of the papers on C-RAN have considered physical-layer techniques that resemble Cell-free Massive MIMO, but without using that terminology. Some people might therefore rightfully associate C-RAN with cell-free-like processing schemes, because the two fit so well together. After all, Cell-free Massive MIMO is a revamp of Network MIMO that makes it more practical.