5G networks are supposed to be fast and to provide higher data rates than ever before. While indoor experiments have demonstrated huge data rates in the past, this has been the year in which vendors have competed to set new data rate records in real deployments.

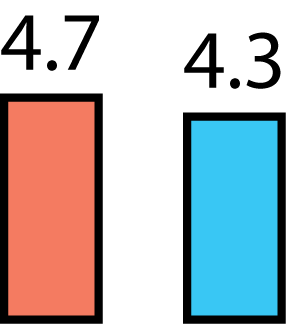

Nokia achieved 4.7 Gbps in an unnamed carrier’s cellular network in the USA in May 2020. This was achieved using dual connectivity, in which a user device simultaneously used 800 MHz of 5G mmWave spectrum and 40 MHz of 4G spectrum.

The data rate with the Nokia equipment was higher than the 4.3 Gbps that Ericsson demonstrated in February 2020, but Ericsson “only” used 800 MHz of mmWave spectrum. Although no details have been released on how the 4.7 Gbps was divided between the mmWave and LTE bands, it is likely that Ericsson and Nokia achieved roughly the same data rate over the mmWave bands. The main novelty was rather the dual connectivity between 4G and 5G.
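To make that reasoning concrete, the short sketch below computes what the LTE leg would have had to carry if the mmWave part of Nokia's result matched Ericsson's 4.3 Gbps. This split is an assumption for illustration only, since no breakdown was published; the function name is hypothetical.

```python
def implied_lte_share(total_gbps: float, mmwave_gbps: float, lte_bw_mhz: float) -> tuple[float, float]:
    """Return (LTE rate in Gbps, LTE spectral efficiency in bps/Hz).

    ASSUMPTION: the mmWave leg delivered mmwave_gbps; the remainder is
    attributed to the LTE leg of the dual-connectivity link.
    """
    lte_gbps = total_gbps - mmwave_gbps
    # Gbps / MHz = 1e9 / 1e6, so multiply by 1e3 to get bps/Hz
    lte_se = lte_gbps * 1e3 / lte_bw_mhz
    return lte_gbps, lte_se


# Nokia's 4.7 Gbps total, hypothetically split using Ericsson's 4.3 Gbps mmWave figure
lte_gbps, lte_se = implied_lte_share(4.7, 4.3, 40.0)
print(f"Implied LTE share: {lte_gbps:.1f} Gbps, {lte_se:.1f} bps/Hz over 40 MHz")
```

Under this assumption, the 40 MHz LTE carrier would only need to contribute a few hundred Mbps, which is consistent with the mmWave bands doing most of the work.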

The high data rates in these experiments are enabled by the abundant spectrum, while the spectral efficiency is only about 5.4 bps/Hz (4.3 Gbps over 800 MHz). This can be achieved by 64-QAM modulation and high-rate channel coding, a combination of modulation and coding that was already available in LTE. From a technology standpoint, I am more impressed by reports of 3.7 Gbps being achieved over only 100 MHz of bandwidth, because then the spectral efficiency is 37 bps/Hz. Since a single data stream carries at most 8 bits per symbol with 256-QAM, this requires spatially multiplexing several data streams with MIMO. Such data rates can be achieved in conventional sub-6 GHz bands, which have better coverage and, thus, offer a more consistent 5G service quality.
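The spectral-efficiency arithmetic above can be checked with a few lines of code. This is a simplified sketch: it treats one modulation symbol per second per Hz (ignoring pilot, cyclic-prefix, and other overheads), uses a hypothetical code rate of 0.9 for 64-QAM, and assumes 256-QAM (8 bits per symbol) as the per-stream ceiling when bounding the number of spatial streams.

```python
import math


def spectral_efficiency(rate_gbps: float, bandwidth_mhz: float) -> float:
    """Spectral efficiency in bps/Hz: data rate divided by bandwidth."""
    # Gbps / MHz = 1e9 / 1e6, so multiply by 1e3 to get bps/Hz
    return rate_gbps * 1e3 / bandwidth_mhz


def per_stream_efficiency(bits_per_symbol: int, code_rate: float) -> float:
    """Idealized bps/Hz of one data stream, ignoring all overheads."""
    return bits_per_symbol * code_rate


def min_streams(target_se: float, max_bits_per_symbol: int = 8) -> int:
    """Lower bound on the number of parallel spatial streams.

    ASSUMPTION: each stream carries at most max_bits_per_symbol bps/Hz
    (8 corresponds to uncoded 256-QAM).
    """
    return math.ceil(target_se / max_bits_per_symbol)


mmwave_se = spectral_efficiency(4.3, 800.0)  # Ericsson's mmWave record
sub6_se = spectral_efficiency(3.7, 100.0)    # the 100 MHz report
qam64_se = per_stream_efficiency(6, 0.9)     # 64-QAM with a hypothetical rate-0.9 code

print(f"mmWave: {mmwave_se:.1f} bps/Hz, single 64-QAM stream: {qam64_se:.1f} bps/Hz")
print(f"Sub-6 GHz: {sub6_se:.1f} bps/Hz, needs at least {min_streams(sub6_se)} streams")
```

Under these assumptions the bound comes out at five streams; in practice more are needed once coding and overheads are accounted for, whereas the mmWave records fit within a single 64-QAM stream.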